The Mediacom SSH Issue

Sometimes it’s a miracle the Internet works at all. For the past week or so I’ve been unable to clone, pull from, or push to private Git repositories from either Bitbucket or GitHub using the normal git clone git@bitbucket.org:whatever/whatevs.git syntax. The problem had the symptoms of a blocked port or a bad network route; I’d issue the command in Terminal and wait, and wait, and wait, and the command would eventually time out. After a quick look at the GitHub documentation I tried ssh -T git@github.com, which also timed out and confirmed my suspicions. The SSH protocol was not getting through, but VPN and normal web traffic were.

The Future of DevOps is AI

The work of systems administration, that is, racking new hardware, running cables, and loading operating systems, is quickly being eclipsed by devops. Servers come from the factory ready to rack, and the base operating system has become nearly meaningless in the context of running applications thanks to Docker. All you need is a baseline Linux install; the specifics of what each application needs to run are taken care of inside the Docker container.

The City Museum

I’m in St. Louis for the Strange Loop conference, and the conference kicked off last night with a party at the City Museum. I didn’t bother to look into the museum much before I came. A few friends told me a bit about it, but it wasn’t enough for me to really understand what the museum was; the City Museum isn’t a museum, it’s a mad amusement park.

On Computing Tomorrow

I’ve been thinking more about my defense of the Mac as a long-term computing platform, and I’m slowly coming around to understanding that at the base of my ideas is a type of willful ignorance that I should know better than to indulge in. The world is changing, computers are changing, and how we work and interact with them is changing drastically. To get to the root of this, let’s follow the five “whys” of why I need a Mac to work.

Studying in the Pit

I just started reading Cal Newport’s Deep Work and I’ve found myself nodding along in agreement through the introduction and first two chapters. His description of the environment needed for intense, concentrated study reminded me of a time I went through a period of deep work, one that is unfortunately difficult to replicate.

cloudchain

Today, the team I’m a part of at TargetSmart is releasing our first open source project, a bit of Python I like to call “cloudchain”. cloudchain is designed to make it easy to store and retrieve secrets using AWS. cloudchain relies on the AWS Identity and Access Management (IAM) Key Management Service (KMS) to securely store and manage access to encryption keys, and stores the encrypted secret in a DynamoDB table.

Standing Desk Review

For the past two months I’ve been working, on and off, with a Rocelco Height Adjustable Standing Desk Riser, a less expensive choice for working at a standing desk than the popular VARIDESK. The Rocelco is a solid alternative for budget conscious workers, but as with most products, the drop in price comes with a set of trade-offs.

The New Setup

Making The Move From Sysadmin to DevOps

Everyone’s professional path follows a slightly different trajectory. We are each a unique recipe of skills, experience, and interests, which shape who we are and how we come to be in the careers that we have. My experience in moving from a systems administrator to a devops role is unique, because, well, we are all unique.

DevOps & Evolving Systems Administration

The phrase “DevOps” gets thrown around quite a bit, so I thought it might be helpful for me to write down exactly what it means to me. DevOps is the evolution of systems administration. A few years ago, I noticed that the SysAdmin field was finally starting to change, after years of being relatively static. For decades, a sysadmin would set up the hardware, install the operating system, set up SSH (or telnet, in the bad old days), install your application, and get it running. Even when virtualization became more mainstream and worked its way into production workloads, it didn’t change the core tasks of a sysadmin. There were simply more boxes to manage, and without appropriate configuration management, each virtual machine became a unique little snowflake. A few tools like CFEngine, Puppet, or Chef became more commonplace to ease the burden of virtual machine sprawl, but it wasn’t until cloud computing came along that the role of a sysadmin really started to change.

Everything Changes

And everything is changing for me again. The CTO of the company I work for spoke with me yesterday: our office is being shut down and they are laying off the staff. I’ve got till March 1st to find something new.

Standing Around

I was having problems with my lower back, not an uncommon issue, especially for those of us who spend our day staring at a computer screen. My problem was exacerbated by my poor posture in my chair. I tend to slouch after a couple of hours, and then slowly slide lower and lower into my chair until, at the last moment before I fall out of it, I reposition myself and sit up again. I also run in the morning, and I rarely have time to stretch properly after a run, a bad habit that needs to be addressed. By the end of the day I’d stand up and crack my lower back three or four times, and know that if I turned the wrong way I would be out of commission for a week or so while my back untwisted itself.

The Million Monkeys

Computers, the bicycles for the mind, the idea engines; when we work at a computer we open the door to limitless avenues of creativity. Cracking open the lid of a laptop can be the first step to writing a novel, starting a new career, or getting in touch with long lost friends. But, when the machines misbehave, when they don’t perform as expected or present their interface in ways that are difficult or impossible to decipher, even the most mundane of tasks become a chore. The possibilities for the future melt away under the perception that computers are difficult and unreliable, our untrustworthy opponent to getting things done.

A Different Vision of the Future

I ran across a few articles in the past week or so that predict the majority of the population will be living in cities by 2050.[1] I don’t dispute the projection; these people generally know what they are talking about, but I would like to do a bit of daydreaming of my own. I can envision a world of small towns populated by remote workers and independent service providers, communities with relationships that are closer, deeper, and happier than their city dwelling counterparts.

[1] There are several others. Just do a search for “seven billion live in cities in 2050” for more.

The Hardware Racket

Every now and then something just gets to me, and for the past few weeks, that something has been the process of purchasing enterprise hardware. Servers, SANs, load balancers, the kind of equipment that, instead of a price and an “Add to Cart” link, comes with directions on who to call.

Cutting Corners

After reading the MacSparky piece on craftsmanship, I’m reminded of how I like to look at my career as a systems administrator. I find that there are times when things that are not quite right just bother me. Like when there are inconsistencies or one-offs scattered throughout the environment I am responsible for. There may well be perfectly logical reasons why some systems are monitored and some are not, why some are registered with configuration management and others are not, but in my mind it is these little inconsistencies that add up and make your work look sloppy.

A New World

CocoaHeads changed my life. This afternoon I am killing time in a coffee shop, about to head to work for an appointment with HR. When I get there, I’ll turn in my badge, they will wish me luck, and I’ll walk out the door. Monday, I start a new chapter in my life with T8 Webware. To say that I’m a little nervous about this change would be an understatement. I’ve spent time with these guys, they are smart, ambitious, and I believe in what they are doing. I’m going to be part of building something awesome, and I’m extremely excited.

Mandatory

My workplace is adopting Agile methodologies for our development and client relations departments. As part of the adoption, it was decided that all of IT would attend a three hour overview of what Agile is and why it was important. This is all fine and well, but in making the training mandatory, instead of optional, the organizers lost a good deal of opportunity.

Dazzle Them With Science

It’s not really a science, it’s more of an art. If you are careful, and attentive, you can see when someone starts working this particular art form. In a technical discussion, bit by bit, you start getting lost in the conversation, wondering how we got on to this topic, when it doesn’t have anything to do with what needs to be accomplished. Then you realize that the same guy has been talking for the past few minutes, and he’s been working his art, casting his spell, and the whole room has fallen under it. He’s convinced everyone in the room that he knows so much more, that his knowledge on the topic is so vastly superior to anyone present that no one is on the same level. Which is exactly where he wants your mind to be, because the next step after that is agreeing with whatever he wants to do.

The end of the IT department

37signals comments on a trend I’ve been noticing for a few years. Data centers and IT departments are not the core competency of most businesses; they are a requirement of operating the business. Or, at least, they have been for the past thirty years or so. Businesses are now seeing the benefits of shifting their effort away from what they are not good at, controlling IT, and toward what they are good at, which is whatever makes them money.

Be Great

If you take a moment to look around the room you are in now, what do you see? Are you surrounded by things that matter, and were built by people who care? Or, more likely, are you surrounded by mass produced, assembly line, imported goods that you honestly don’t believe will last all that long? I’ve been thinking about quality again, and how it applies to me, to what I do, and how I spend my time.

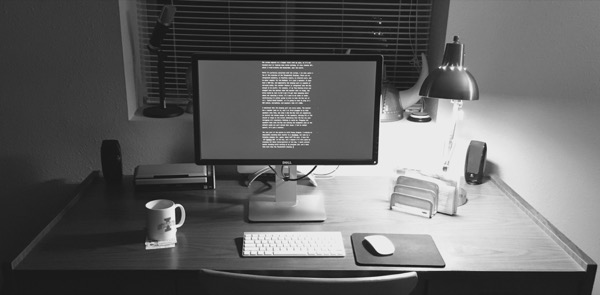

Clean and Clutter Free

I like to keep both my desk and my computer desktop clean and clutter free. I’ve found that when there is less visual noise, I’m able to better concentrate and focus. In the article “The Proximity Compatibility Principle: Its Psychological Foundation and Relevance to Display Design”, Wickens and Carswell outline scientific principles that back up my personal preference.

Quality

We’ve been having a months long discussion at work around which Linux OS to use. It’s all come to a head recently, and it looks like the winner is going to be Red Hat. The decision leaves a slightly sour taste in my mouth, but over the course of the past year I’ve gotten used to having it around. While trying to understand why I’ve got such a dislike for this particular flavor of Linux, I thought it might help to take another look at OpenBSD.

Add a User - Send an Email

I was asked on Twitter the other day why I disliked IBM’s enterprise software. This, in addition to my previous TWS rant, is my answer to that question.

New SysAdmin Tips

My answer to a great question over at serverfault.

First off, find your logs. Most Linux distros log to /var/log/messages, although I’ve seen a couple log to /var/log/syslog. If something is wrong, most likely there will be some relevant information in the logs. Also, if you are dealing with email at all, don’t forget /var/log/mail. Double-check your applications, find out if any of them log somewhere ridiculous, outside of syslog.

Brush up on your vi skills. Nano might be what all the cool kids are using these days, but experience has taught me that vi is the only text editor that is guaranteed to be on the system. Once you get used to the keyboard shortcuts, and start creating your own triggers, vi will be like second nature to you.

Read the man page, and then run the following commands on each machine, and copy the results into your documentation:

cat /etc/*release*

cat /etc/hosts

cat /etc/resolv.conf

cat /etc/nsswitch.conf

df -h

ifconfig -a

free -m

crontab -l

ls /etc/cron.d

echo $SHELL

That will serve as the beginnings of your documentation. Those commands let you know your environment, and can help narrow down problems later on.
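If you have more than a couple of machines to document, the commands above can be wrapped in a small script. What follows is only a sketch: the function names record and collect_baseline, and the output file layout, are my own inventions for illustration, and it assumes the name service switch file lives at /etc/nsswitch.conf as it does on most distros.

```shell
#!/bin/sh
# Sketch only: record and collect_baseline are hypothetical helper names.

# record OUTFILE COMMAND [ARGS...]
# Append a header naming the command, then its output, to OUTFILE.
# A missing or failing command is noted instead of stopping the run.
record() {
    out="$1"
    shift
    printf '### %s\n' "$*" >> "$out"
    "$@" >> "$out" 2>&1 || printf '(command failed or is not available)\n' >> "$out"
    printf '\n' >> "$out"
}

# collect_baseline OUTFILE
# Run the baseline commands and save everything in one text file.
collect_baseline() {
    out="$1"
    : > "$out"    # start with an empty file
    record "$out" cat /etc/*release*
    record "$out" cat /etc/hosts
    record "$out" cat /etc/resolv.conf
    record "$out" cat /etc/nsswitch.conf
    record "$out" df -h
    record "$out" ifconfig -a
    record "$out" free -m
    record "$out" crontab -l
    record "$out" ls /etc/cron.d
    record "$out" echo "${SHELL:-unknown}"
}
```

Running collect_baseline "baseline-$(hostname).txt" on each machine should leave you with one file per host that you can paste into your documentation.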

Grep through your logs and search for “error” or “failed”. That will give you an idea of what’s not working as it should. Your users will give you their opinion on what’s wrong; listen closely to what they have to say. They don’t understand the system, but they see it in a different way than you do.
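As a rough sketch of that log search, here are two small helpers built on grep. The function names scan_log and count_log_problems are made up for this example, and the pattern only covers the two words mentioned above; your applications may use different wording.

```shell
#!/bin/sh
# Sketch only: scan_log and count_log_problems are hypothetical names.

# scan_log FILE
# Print every line mentioning "error" or "failed" (any case),
# prefixed with its line number in the file.
scan_log() {
    grep -inE 'error|failed' "$1"
}

# count_log_problems FILE
# Print how many lines match. grep exits with status 1 when nothing
# matches, which is not a failure here, so that status is accepted.
count_log_problems() {
    grep -icE 'error|failed' "$1" || [ $? -eq 1 ]
}
```

Something like scan_log /var/log/messages | tail -n 20 shows the most recent matches, which is usually where I would start reading.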

When you have a problem, check things in this order:

-

Disk Space (df -h): Linux, and some apps that run on Linux, do some very strange things when disk space runs out. It may seem unrelated, until you check and find a filesystem 100% full.

-

Top: Top will let you know if you’ve got some process that’s stuck out there eating up all of your available CPU cycles. Nothing should consume 99% CPU for any extended period of time. If it’s a legitimate process, it should probably fluctuate up and down. While you are in top, check…

-

System Load: The system load should normally be below 3 on a standard server or workstation. The system load is based on CPU, memory, and I/O.

-

Memory (free -m): RAM use in Linux is a little different. It’s not uncommon to see a server with nearly all of its RAM used up. Don’t Panic, if you see this, it’s mostly just cache, and will be cleared out as needed. However, pay close attention to the amount of swap in use. If possible, keep this as close to zero as you can. Insufficient memory can lead to all kinds of performance problems.

-

Logs: Go back to your logs, run tail -500 /var/log/messages | more and start reading through and seeing what’s been going on. Hopefully, the logs will be able to point you in the direction you need to go next.
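Two of the checks in the list above, disk space and swap, are easy to automate. This is a minimal sketch and not a monitoring system: the function names are hypothetical, the 90 percent threshold in the usage note is an arbitrary choice, and the parsing assumes the POSIX df -P column layout and the usual "Swap:" line printed by free -m. Mount points containing spaces would not be handled correctly.

```shell
#!/bin/sh
# Sketch only: flag_full_filesystems and swap_used_mb are hypothetical names.

# flag_full_filesystems THRESHOLD
# Read `df -P` output on stdin and print the mount point and usage of
# every filesystem whose usage is at or above THRESHOLD percent.
flag_full_filesystems() {
    awk -v limit="$1" '
        NR > 1 {
            use = $5
            sub(/%/, "", use)          # "100%" becomes "100"
            if (use + 0 >= limit + 0)
                print $6 " " $5
        }'
}

# swap_used_mb
# Read `free -m` output on stdin and print the swap in use, in MB.
swap_used_mb() {
    awk '/^Swap:/ { print $3 }'
}
```

In practice you would run df -P | flag_full_filesystems 90 and free -m | swap_used_mb. Anything printed by the first command, or a swap number well above zero from the second, is worth a closer look before you move on to the logs.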

A well maintained Linux server can run for years without problems. We just shut one down that had been running for 748 days, and we only shut it down because we had migrated the application over to new hardware. Hopefully, this will help you get your feet wet, and get you off to a good start.

One last thing, always make a copy of a config file you intend to change, and always copy the line you are changing, and comment out the original, adding your reason for changing it. This will get you into the habit of documenting as you go, and may save your hide 9 months down the road.
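The copy-before-you-change habit is easier to keep when it is a single command. Here is a minimal sketch; backup_config is a hypothetical name, and the timestamp suffix is just one naming scheme I find convenient.

```shell
#!/bin/sh
# Sketch only: backup_config is a hypothetical helper name.

# backup_config FILE
# Copy FILE to FILE.YYYYMMDD-HHMMSS.bak, keeping its mode and timestamps,
# and print the name of the backup.
backup_config() {
    src="$1"
    if [ ! -f "$src" ]; then
        echo "backup_config: $src is not a regular file" >&2
        return 1
    fi
    dest="$src.$(date +%Y%m%d-%H%M%S).bak"
    cp -p "$src" "$dest" && printf '%s\n' "$dest"
}

# Inside the file itself, the edit might then look like this
# (a made-up setting, shown only to illustrate the commenting habit):
#
#   # 25 Feb. 2009: raised to handle the new application servers
#   #MaxClients 150
#   MaxClients 300
```

Run backup_config on the file first, then make the edit. The backup gives you a way back, and the commented line tells the next person why the value changed.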

Linux Hidden ARP

To enable an interface on a web server to be part of an IBM load balanced cluster, we need to be able to share an IP address between multiple machines. This breaks the IP protocol, however, because you could never be sure which machine will answer a request for that IP address. To fix this problem, we need to get down into the IP protocol and investigate how the Address Resolution Protocol, or ARP, works.

SLES and RHEL

Comparing two server operating systems, like SuSE Linux Enterprise Server (SLES) and Red Hat Enterprise Linux (RHEL), needs to answer one question, “what do we want to do with the overall system”? The version of Linux running underneath the application is immaterial, as long as the application supports that version. It is my opinion that we should choose the OS that supports all of our applications, and gives us the best value for our money.

The Unix Love Affair

There’s been times when I’ve walked away from the command line, times when I’ve thought about doing something else for a living. There’s even been brief periods of time when I’ve flirted with Windows servers. However, I’ve always come back to Unix, in one form or another. Starting with Solaris, then OpenBSD, then every flavor of Linux under the sun, to AIX, and back to Linux. Unix is something that I understand, something that makes sense.

Back in ‘96 when I started in the tech field, I discovered that I have a knack for understanding technology. Back then it was HF receivers and transmitters, circuit flow and 9600 baud circuits. Now I’m binding dual gigabit NICs together for additional bandwidth and failover in Red Hat. The process, the flow of logic, and the basics of troubleshooting still remain the same.

To troubleshoot a system effectively, you need to do more than just follow a list of pre-defined steps. You need to understand the system, you need to know the deep internals of not only how it works, but why. In the past 13 years of working in technology, I’ve found that learning the why is vastly more valuable.

Which brings me back to why I love working with Unix systems again. I understand why they act the way that they do, I understand the nature of the behavior. I find the layout of the filesystem to be elegant, and a minimally configured system to be best. I know that there are a lot of problems with the FHS, and I know that it’s been mangled more than once, but still. In Unix, everything is configured with a text file somewhere, normally in /etc, but from time to time somewhere else. Everything is a file, which is why tools like lsof work so well.

Yes, Unix can be frustrating, and yes, there are things that other operating systems do better. It is far from perfect, and has many faults. But, in the end, there is so much more to love about Unix than there is to hate.

Slowly Evolving an IT System

We are going through a major migration at work, upgrading our four and a half year old IBM blades to brand spanking new HP BL460 G6’s. We run a web infrastructure, and the current plan is to put our F5’s, application servers, and databases in place, test them all out, and then take a downtime to swing IPs over and bring up the new system. It’s a great plan, it’s going to work perfectly, and we will have the least amount of downtime with this plan. Also… I hate it.

The reason I hate it has more to do with technical philosophy than with actual hard facts. I prefer a slow and steady evolution, a recognition that we are not putting in a static system, but a living organism whose parts are made up of bits and silicon. What I’d like to do is put in the database servers first, then swing over the application servers, and then the F5, which is going to replace our external web servers and load balancers. One part at a time, and if we really did it right, we could do each part with very little downtime at all. However, I can see the point in putting in everything at once: you test the entire system from top to bottom, make sure it works, and when everyone is absolutely certain that all the parts work together, flip the switch and go live. But… then what?

What about six months down the road when we are ready to add capacity to the system, what about adding another database server, what about adding additional application servers to spread out the load, what about patches?

Operating systems are not something that you put into place and never touch again. IT systems made up of multiple servers should not be viewed as fragile, breakable things that should not be touched. We can’t set this system up and expect it to be the same three years from now when the lease on the hardware is up. God willing, it’s going to grow, flourish, change.

Our problems are less about technology, and more about our corporate culture.

Teach A Man To Fish

As a general rule, I really don’t like consultants. Not that I have anything against any of them personally, it’s just that as a whole, most consultants I’ve worked with are no better than our own engineers and administrators. The exception that proves this rule is our recent VMware consultant, who was both knowledgeable and willing to teach. Bringing in an outside technical consultant to design, install, or configure a software system is admitting that not only do we as a company not know enough about the software, we don’t plan on learning enough about it either. Bringing in a consultant is investing in that company’s knowledge, and not investing in our own.

Regarding OS Zealotry

Today I found myself in the unfortunate situation of defending Linux to a man I think I can honestly describe as a Windows zealot. I hate doing this, as it leads to religious wars that are ultimately of no use, but it’s really my own fault for letting myself be sucked into it. It started when we were attempting to increase the size of a disk image in VMware while a Red Hat guest was running. It didn’t work, and we couldn’t find any tools to rescan the SCSI bus, or anything else to get Linux to recognize that the disk was bigger. I was getting frustrated, and the zealot began to laugh, saying how easy this task was in Windows. Obviously, I felt slighted since I’m one of the Unix admins at $work, and decided I needed to defend the operating system and set of skills that pays the bills here at home. And so, we started trading snide remarks back and forth about Linux and Windows.

Spit and Polish

After spending a week with Linux as my sole computer, I find it very refreshing to come back home to my Mac. FedEx says my wife’s PC should be here tomorrow, so she can go back to Word 2007 and I can have Mactimus Prime back. It’s not that I didn’t enjoy working with Linux, I did, but I’ve found that once the geeky pleasure of discovering something new wears off, there are problems.

Communications

Ask any mechanic, machinist, or carpenter what the single most important thing that contributes to their success is, and what they are bound to tell you is “having the right tool for the job”. Humans excel in creating physical tools to accomplish a certain task. From hammers to drive a nail to the Jaws of Life to pry open a car, the right tool will save time, money, and frustration. It’s interesting to note the contrast that people have such a hard time with conceptual tools. Software designed to accomplish a specific task, or several tasks, is often forced into a role that it may not fit into, making the experience kludgy, like walking through knee deep mud.

I’ve found this problem to be especially prevalent in business environments, where the drive to “collaborate” brings many varied and sundry applications into the mix. While hurrying to the next great collaboration tool they forget to ask the absolute most important question: What do we need this software to do?

To communicate effectively in a business environment, it is imperative to use the right tool for the job. To determine what the right tool for the job is, you first have to ask yourself exactly what the job is. Do you need to ask a co-worker a quick, immediate question? Is there a more detailed project question that you need to ask, and maybe get the opinions of a few others too? Do you have something that you need to let a large group of people know, maybe even the entire company? Each of these tasks is best suited to a different tool. Unfortunately, I’ve most often seen each of these tasks shoe-horned into email.

Email is a personal communications medium, best suited for projects or questions that you do not need immediate responses to. There have been many times when I’ve gone through my inbox and found something that didn’t grab my attention when it was needed, and by the time I looked at it, it was past due. Email is asynchronous: ask a question, and wait for a response whenever the receiving party has time.

If you do need an immediate response, the best tool would be instant messaging. IM pops up and demands the receiving person’s attention. When requesting something via IM, the person on the other end has to make a decision either to ignore you or to answer your question. Long explanations are not a good fit for IM, but short, two or three sentence conversations are perfect for it.

When considering sending something out to the entire company, keep in mind that email is a personal communications medium. Company wide email blasts are impersonal, and for the most part require no action on the part of the receiver other than to eventually read it. A better tool for this job is an internal company blog, accompanied by an RSS feed. RSS is built into all major browsers now, and could be built into the base operating system build for PCs. Every single time I get something from our company green team or an announcement from the CEO, I can’t help but think that a blog would be the best place for such formal, impersonal communications. A blog could be archived for searching, new announcements broadcast through RSS, and best of all accessible when the intended receiver has the time and attention to best appreciate the content. To me, company wide email is the same thing as spam, and for the most part, is treated as such.

One other form of internal communications which is far, far too often maligned beyond recognition is shared documentation. Technical documentation especially suffers from document syndrome, which is having a separate (often Word) file for each piece of documentation, spread out through several different directories on Windows shares, or worse, on the local hard drive of whoever wrote it. Such documentation should be converted to a wiki as soon as possible. If you are writing a book, I hear Word is a fairly good tool to use. If you are writing a business letter, again, great tool. If you are documenting the configuration of a server, a word processor should not be launched. Word (or OpenOffice) documents have a tendency to drift, and are difficult to access unless you are on the internal network. Trying to access a Windows share from my Mac at home through the VPN is something that I’ve never even considered trying.

A wiki is perfectly suited for internal documentation. It is a single central place to organize all documents in a way that makes sense, accessible from a web browser, easy to keep up to date, and most importantly, searchable. Need to know how to set up Postfix? Search the internal wiki. Need to know why this script creates a certain file? Search the internal wiki. Everything should be instantly searchable. Perhaps search is not the most important feature of a wiki; perhaps the most important is the ability to link to other topics according to context. I can get lost in Wikipedia at times, jumping from one link to another as I explore a topic. The same thing can happen to internal documentation. This script creates this document for this project, which is linked to an explanation of the project, which contains links to other scripts and further explanation. Creating the documentation can seem time consuming, until you are there at 2AM trying to figure out why a script stopped working. Then the hour spent writing the explanation at 2PM doesn’t seem so bad.

One of the first things I did when starting my job was to set up both a personal, internal blog and a shared wiki for documentation. I used WordPress for the blog, and MediaWiki for the wiki. Both are excellent tools, and very well suited for their purpose. If I were a manager, I’d encourage my team to spend 15 minutes or so at the end of the day posting what they did for the day in their personal blog. Could you imagine the gold mine you’d have at the end of a year or so? Or the resources for justifying raises? Solid documented experience with products and procedures, what works and what doesn’t. The employee’s brain laid out.

Internal moderated forums are something that I haven’t tried yet, but I can see benefits to this as well. They’ve been used on the Internet for years, and I can imagine great possibilities there, especially for problem resolution. How about a forum dedicated to the standard OS build of PCs? Or one for discussing corporate policies?

Blogs, wikis, forums, and IM are tools whose tasks are far too often wedged into email. If an entire organization begins to use the right tool for the job, the benefits will soon become apparent. Then you’d wonder why you ever did it the other way at all.

Shell Script Style

My co-worker and I spent the better part of yesterday afternoon going through a former employee’s shell scripts to try to determine what they were and what he was trying to do. The script worked, for a while, but there were several mistakes. The mistakes were not in strict syntax, they were in style. Here are a few simple rules to follow to write great scripts:

-

Always, always, always start off each and every script with a shebang line: #!/bin/sh. Starting off your script with this line tells the system which interpreter to use for the commands in the script. Without this line, the interpreter is chosen by whatever shell you happen to launch the script from, so the same script can behave differently from one user or system to the next. This is bad because the script then depends on its caller’s environment instead of being self contained and portable.

-

Keep your script self contained: If at all possible, try to avoid writing files in different directories. Or, even better, try to avoid writing files at all. Use variables when you can, write files when you have to.

-

Avoid sourcing other scripts or files containing functions: I read about this method in Wicked Cool Shell Scripts, but I disagree that it is as useful as they say. Writing a custom function to send an email is a great idea. Separating it out of the script you are working on at the time is not. Again, keep the script self contained. There are obvious exceptions to this rule. If your function is over 50 lines of code, and reused in multiple other scripts, then by all means, source it. If your function is 10 lines, create a vi shortcut for it and add it to the top of the script.

-

Comment tasks: Each block of code in your shell script is meant for a specific task. Add a comment for this block. Make it easy to read, and simple to understand. Assume that you will not work there forever, and someone else will need to read your code and make sense out of it. Also assume that in a year, you will forget everything you did and why you did it and need a reminder.

-

Keep it simple: Scripts should flow logically from top to bottom. If you are creating functions, make it obvious using a comment. Reading a script should be as easy as reading a book; if it’s not, then you are making things overly complicated and difficult to read.

-

End each script with #EOF: This is purely a matter of taste, but I find it adds a nice closure to the script.

The easiest thing to do is to create another script whose purpose in life is to create new scripts. Couple this script with a vi shortcut (mine is ,t) to create the skeleton of the script and you can quickly create powerful, well formatted, easy to read scripts. Here’s an example of mine:

#!/bin/sh
#
# scripty.sh: This script creates other scripts
# Created: 25 Feb. 2009 -- inbound@jonathanbuys.com
#
##############################################################
# A place for variables
VAR1="Set any variables at the top"
DATE=`date`
ANDTHEN="Whatever"

# A place for functions
some_func(){
    # Print the current date, then a message
    date
    echo "Whatever's Clever!"
}

# Get down to writing the script
# (variables are quoted so their whitespace is preserved)
echo "$VAR1"
echo "$DATE"
echo "$ANDTHEN"
some_func

# etc...
##############################################################
#EOF
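For what it’s worth, a generator along those lines might look something like the sketch below. This is not my actual script; the function name new_script, the placeholder description line, and the use of $USER in the header are all assumptions made for the example.

```shell
#!/bin/sh
# Sketch only: new_script is a hypothetical generator, not the original.

# new_script NAME
# Write an empty skeleton to NAME and make it executable.
# Refuses to overwrite a file that already exists.
new_script() {
    name="$1"
    if [ -e "$name" ]; then
        echo "new_script: $name already exists" >&2
        return 1
    fi
    # The here-document is unquoted so the file name, date, and user
    # are filled in when the skeleton is written.
    cat > "$name" <<SKELETON
#!/bin/sh
#
# $(basename "$name"): describe what this script does
# Created: $(date '+%d %b. %Y') -- ${USER:-unknown}
#
##############################################################
# A place for variables

# A place for functions

# Get down to writing the script

##############################################################
#EOF
SKELETON
    chmod +x "$name"
}
```

Calling new_script backup_logs.sh would give you a file that already has the shebang line, the header, the three sections, and the closing #EOF, so all that is left is the actual work.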

This article doesn’t talk about syntax, only style. There’s plenty of help with syntax available on the Intertubes. Also, this is my style; as you progress as a sysadmin or scripter of some sort or another, you are bound to come up with your own style that suits you. My style is based on the documentation at grox.net. My style has evolved over time, as will yours, but this is a good place to start.

Systems Administrator

From time to time I’m asked by members of my family or friends of mine outside the tech industry what it is that I do for a living. When I respond that I’m a sysadmin, or systems administrator for Linux and UNIX servers, more times than not I get the “deer in the headlights” look that says I may as well be speaking Greek. So, for a while, I’ve taken to saying “I work in IT”, or “I work with computers, really big computers” or even “I’m a computer programmer”, which isn’t exactly accurate. Although I do write scripts, or even some moderate Perl, I’m still not officially a programmer. I’m a systems administrator, so, let me try to explain, my dear friends and family, what it is I do in my little box all day.

First, some basics; let’s start at square one. Computers are made up of two parts, hardware and software, sort of like the body and soul of a person. Without hardware, software is useless, and vice-versa. The most basic parts of the hardware are the CPU, which is the brain, the RAM, which is the memory, the disk, which is a place to put things, and the network card, which lets you talk to other computers. For each of these pieces of hardware there needs to be some way to tell them how to do what they are intended to do. Software tells the hardware what to do. I forgot two important pieces of hardware: the screen and the keyboard/mouse. They let us interact with the computer, at least until I can just tell it what to do Star Trek style.

Getting all of these pieces of hardware doing the right thing at the right time is complicated, and requires a structured system, along with rules that govern how people can interact with the computer. This system is the Operating System (OS). There are many popular operating systems: Windows, OS X, and Linux are the big three right now. The OS tells the hardware what to do, and allows the user to add other applications (programs) to the computer.

Smaller computers, like your home desktop or laptop, have network cards to get on the Internet. The network card will be either wired or wireless; which one doesn’t really matter. When you get on the Internet, you can send and receive information to and from other computers. This information could be an email, a web page, music, or lots of other media. Most of the time, you are getting this information from a large computer, or a large group of computers, that gives out information to lots of home computers just like yours. Since these computers “serve” information, they are referred to as Servers.

Large servers are much like your home computer. They have CPU, RAM, disk, etc… They just have more of it. The basics still apply though. Servers have their own operating system, normally either Windows, Linux or UNIX. Some web sites or web services (like email) can live on lots of different servers, each server having its own job to do to make sure that you can load a web page in your browser. To manage, or “administer” these servers is my job. I administer the system that ensures the servers are doing what they are supposed to do. I am a systems administrator. It is my responsibility to make sure that the servers are physically where they are supposed to be (a data center, in a rack), that they have power and networking, that the OS is installed and up to date, and that the OS is properly configured to do its job, whatever that job may be.

I am specifically a UNIX sysadmin, which means that I’ve spent time learning the UNIX interface, which is mostly text typed into a terminal, and it looks a lot like code. This differs from Windows sysadmins, who spend most of their time in an interface that looks similar to a Windows desktop computer. Linux grew out of the UNIX tradition as a UNIX-like system that is more user friendly and flexible, and it is also where I spend most of my time.

Being a sysadmin is a good job in a tech driven economy. I’ve got my reservations about its future, but I may be wrong. Even if I’m not, the IT field changes so rapidly that I’m sure what I’m doing now is not what I’ll be doing 5-10 years from now. One of these days, maybe I’ll open a coffee shop or a restaurant, or I’ll finally write a book.

JeOS

For better or worse, we are starting to put Ubuntu JeOS images into production in our network. Starting off, we will only put these systems in for our non-IBM services, no WebSphere or DB2, as IBM doesn’t officially support this configuration yet, but for everything else, JeOS looks like a perfect fit.

Consulting in Coralville

In 2007 I spent two months working as a network engineer for a small tech consulting company. The work there was amazing. They had built a long range, city-wide wireless network, and were providing broadband to rural areas. They were also providing a “one stop shop” for everything IT for small businesses in town. The people who built this business were energetic and bright, and I was lucky to have worked there. I could have stayed there longer, made a career out of it, or perhaps launched my own solo career from there. That’s not what happened; I left after two months. The reason: I was scared to death.

The Sorry State of Enterprise Software

I’ve been unlucky enough to be working with quite a few pieces of so-called “enterprise” software, the worst of which lately is the Tivoli Workload Scheduler. TWS is, at its core, a glorified cron. It is a scheduler: you can create jobs, or scripts, and have them executed at given times. You are supposed to be able to cascade jobs, and create dependencies between jobs. This is all well and good, but there are some serious problems with this software.

The first problem is the price. List price for TWS is $33/value unit. IBM bases its pricing scheme on how many CPU cores are in the server that you install their software on: 100 value units per single-core CPU, and 50 value units per core for dual or quad-core CPUs. So, if you have four servers, and each server has a quad-core CPU in it, that’s 16 cores and 800 value units, which comes out to $26,400. I think we just went ahead and bought 1000 value units up front. That’s a fairly good sized amount, and it does not include the cost of the consultant it’s going to take to install, configure, and actually use the software.
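Working the numbers through: $26,400 at $33 per unit is 800 value units, or 16 cores at the multi-core rate of 50 units each. Here is a minimal sketch of that arithmetic, using only the figures quoted above. The function names are made up for illustration, and IBM’s actual pricing rules may well have more cases than these two.

```shell
#!/bin/sh
# Sketch of the value-unit arithmetic, using the figures quoted in the text

PRICE_PER_UNIT=33    # list price in dollars per value unit

# units_per_core CORES_PER_CPU
# 100 value units per core for single-core CPUs, 50 for dual or quad core
units_per_core(){
    if [ "$1" -eq 1 ]; then
        echo 100
    else
        echo 50
    fi
}

# tws_value_units CPUS CORES_PER_CPU
# Total value units for CPUS processors with CORES_PER_CPU cores each
tws_value_units(){
    echo $(( $1 * $2 * $(units_per_core "$2") ))
}

# tws_list_price CPUS CORES_PER_CPU
# List price in dollars for the same configuration
tws_list_price(){
    echo $(( $(tws_value_units "$1" "$2") * $PRICE_PER_UNIT ))
}
```

With these definitions, tws_list_price 4 4 (four quad-core CPUs in total) prints 26400, while tws_list_price 16 4 (sixteen quad-core CPUs, or 64 cores) prints 105600.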

Why tie the cost of the software to the number of cores in the system? TWS doesn’t use CPU resources to actually do any work; it passes the work off to other applications and simply schedules them to be run. The price would almost be bearable if the software actually worked. For $26,000 I’d think that it ought to make me coffee and pancakes in the morning. The reality is that after several months of enduring the software, it still doesn’t work properly.

The end user of the system has been trying to add event rules that fire off an email if a job doesn’t end correctly. Wow, that’s like, what… one line of shell script? But, since this is the TWS, we have to put in a call to IBM. IBM will call back, and ask for a ton of information. They’ll ask for directories that don’t exist, ask you to run commands that may or may not work, and generally take up a lot of time. Meanwhile, I’m starting to think that we are actually beta testing this software for IBM, and they just didn’t bother to tell us.
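For comparison, here is roughly what the plain shell version of “email me if the job fails” could look like. This is a sketch, not anything TWS provides: it assumes a working mailx on the host, and the run_or_alert name and NOTIFY_CMD variable are made up for illustration.

```shell
#!/bin/sh
# Sketch: run a job and send mail if it exits non-zero

# Command used to send the alert; mailx reads the message body from stdin.
# Overridable so the function can be tried without sending real mail.
NOTIFY_CMD="${NOTIFY_CMD:-mailx}"

# run_or_alert RECIPIENT COMMAND [ARGS...]
# Runs COMMAND, mails RECIPIENT on failure, and returns COMMAND's exit status
run_or_alert(){
    recipient="$1"
    shift
    "$@"
    status=$?
    if [ "$status" -ne 0 ]; then
        echo "Job '$*' exited with status $status" |
            "$NOTIFY_CMD" -s "Job failed: $1" "$recipient"
    fi
    return "$status"
}
```

From cron, that would be something like run_or_alert you@example.com /path/to/job.sh, where both the address and the path are placeholders. Without the wrapper it really is about one line: the job, then ||, then an echo piped to mailx.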

And then there’s the user interface. The UI, like many IBM applications, is quite obviously built on Java, evidenced by the length of time it takes to launch. Once it is launched, there are cascading left to right areas of a single window that allow you to perform separate tasks. At $work, I’ve got a 22” monitor, and this is the only application that I expand to full screen. It needs it. The application, called the “Job Scheduling Console”, provides its own tabbed MDI interface. It is extremely confusing. Part of the confusion is that evidently the developers decided that there were too many options in the main application window, and chose to add a second interface to TWS through its integrated WebSphere application server. The second interface, also Java, is accessed through a web browser. Unfortunately, not just any web browser; it seems to only support Internet Explorer. I tried to access it first through Chrome, which did not work at all, and then through Firefox, which almost worked, but there were pieces of the application missing. IE worked well. The web interface is just as jumbled as the fat client on the desktop. Buttons are seemingly randomly placed, with some options hidden in drop down menus and others placed either above or below the data.

There is no clear, obvious method to accomplish anything with this user interface.

And that is not all, my friends, oh no, that is not all. You must also have access to the command line on the server where TWS is installed. Even on the command line TWS is not a good citizen. There is no man page or online help shipped with the application, you have to load a ton of special environment variables, and they provide scripts that launch a faux-shell that only accepts certain commands. One such command, conman, offers the ability to view the logs in real time (why, for the love of God, do you not log everything to syslog?), but only if you enter the command “con se” at the conman prompt. Also, you should enter “lev=4” to make sure you get all the logs. Proper logging in an application can be a lifesaver, and it could have been an area where TWS could redeem itself somewhat. That is not what has happened. The “con se” command only works sometimes. Other times it simply says that it submitted that command to be processed and returns you to your prompt. Great, thanks… so where’s my logs?

Having multiple interfaces to the application is fine, if you could accomplish everything needed in any one interface. However, that is also not the case. You need all three, and the end user must switch between the web interface and the fat client, and I as the administrator must switch between the web client, the fat client, and the command line to try to coax this monster into doing what it is supposed to do. Which is… schedule jobs. That’s really all this is supposed to do, schedule jobs to run. I don’t think it should be this hard.

Take these points into consideration in the light of the cost of the application. Now, let your jaw slowly close and realize that IBM can charge this much because it has found a market that no one else is tapping. TWS is only one example of horrible “enterprise” software; there’s a lot more of it out there. Personally, I see an opportunity here. An opportunity for well thought out, beautifully crafted software that works well, is easy to use, and gets the job done.

Visual Thinking

The ability to visualize a complex system is key to a real understanding of it. To me, this applies to computers and technology. To Daniel Tammet, this applies to numbers and letters, on a far, far more vast scale. Daniel is a savant, an extraordinary person who pushes the boundaries of what we believe we know about human capability and learning. What he has to say rings a bell, because how he views his numbers and letters is similar to how I understand the inner workings of a computer. I can visualize what is going on, or what I want to happen. I can do this because I’ve worked and studied the computer field for years, and because I enjoy the work. What I’ve found is that there is a distinct difference between understanding the technology and memorizing what happens when you click a button.

If you work in the tech industry, memorizing technology is a bad idea. Technology changes, it evolves and grows. It is better to understand the attitudes and purpose of the technology. Networking is a great example. I started out learning about wave propagation theory in the Navy, and about how we could get data from the size, shape, or velocity of the wave. Later, when I went on to learn TCP/IP networking, I found that the data was still transmitted the same way, as 0 or 1, but how it was decoded was different. Then the stack, then the applications, then scripting, and now, I’m learning a high-level programming language, and it’s finally starting to click.

The thing is, I couldn’t have learned these skills if I didn’t have a visual image in my mind about how it worked under the gloss of the computer screen. I can’t imagine trying to use a computer, much less program one, without at least a passing knowledge of what happens when you click that mouse.

Then again, to 99.999% of people, it doesn’t matter, and really shouldn’t. Computers should be so easy to use that you don’t have to learn a new skill to use one effectively. They should be as self explanatory as toasters. Unfortunately, they are not. Windows 7 is coming out this year some time, and it will sport a new user interface which its users will have to learn all over again. Many, many of them will simply try to re-memorize what button does what, and what order to click things in. You shouldn’t have to learn why the computer works the way it does, but it certainly doesn’t hurt. In the end, it makes things much easier too.

The Coffee Cup

I’ve had this coffee cup on my desk at work for the past year or so now. It’s just a plain white cup, with the Ubuntu logo on it. I got it from CafePress. I loved it, for one, because the Ubuntu logo is great. Best Linux logo out there. I also loved it because as I was thinking about how to solve one problem or another, the cup was normally there with hot coffee waiting to be sipped as I pondered the solutions. Today I picked up the cup, walked towards the coffee pot, and dropped the cup. My wonderful Ubuntu coffee cup shattered as it hit the floor.

I loved that cup, so I didn’t want to break it. However, it seems appropriate, as today I also switched back to Windows at work. I’ve been running Ubuntu as my primary desktop at work for several months, and running XP in VirtualBox when needed. Lately, I’ve been needing the VM more and more, as I do more diagramming and planning in VMware Infrastructure Client and Visio, both Microsoft-centric applications. Also, rumor has it that in the next couple of months we will be replacing our aging Lotus Notes servers with Microsoft’s Exchange 2007. IBM released a Linux native Notes client which supports Ubuntu, and really works great. I was hoping to use the Evolution client that comes with Ubuntu once we made the switch to Exchange. Unfortunately, Microsoft changed the MAPI standard for communicating with the server in Exchange 2007, and there is no supported Linux client. That left me with two choices: run Outlook in my VM, or move everything back to Windows and conform to company standards. I debated this in my head for a couple of weeks, but in the past three days I’ve had X crash on me three times in Ubuntu. When X crashes, it takes all of my X applications with it, along with the data… it’s like Windows 95 all over again.

X crashing for no apparent reason was the nail in the coffin for me. I moved all my data over with a USB drive, and Monday I’ll format the Linux partition and run fixmbr from the XP recovery console.

I’ve really enjoyed using Linux, but honestly, it’s kind of relieving to be back in a supported environment again. There are still quite a few desktop tools missing from Ubuntu that are available on Macs and Windows. My current favorite so far is Evernote, with the aforementioned Visio running a close second. Launchy is nice… not as nice as Quicksilver or Gnome-Do, but nice.

Mentioning Gnome-Do brings up another point. Gnome-Do has been acting up lately, catching on something or other and eating up 99% CPU. The developers are aware of the problem, and are working on a solution. However, using Gnome-Do as an example, the very idea of what they are doing with “Release Early, Release Often” completely goes against the grain of a business desktop. Any Linux desktop will contain beta-quality code, and when I’m relying on a computer to do my job, I can’t have it acting as a beta tester. Ubuntu is doing lots of cool stuff with 3D desktops and cutting edge software, but I don’t need it to be cool, I need it to work. Reliably.

One last note about why I’m not using Ubuntu at work any more. My computer is a Dell laptop, mostly used in a docking station, attached to a 22 inch monitor. I noticed after a while that my laptop was getting really hot in the docking station, and I couldn’t tell if Ubuntu was reading the docking station correctly or if it was displaying on both the internal monitor and the external monitor. When I popped the lid on the laptop, the monitor either came on suddenly or was on the entire time, and the keyboard was hot to the touch. In the Gnome “Screen Resolution” preferences I found that I could turn the monitor off from there, and I think that solved that issue, but I’m not sure. I’d hate to think that I was actually causing the hardware harm by running Linux on it. I don’t want to spread FUD, but if it’s true, it’s true. When I’m running Windows, I don’t have that problem at all.

So, now I’m looking for a new coffee cup… something to inspire me, and be my companion in my little beige box. Whatever the new design is, it needs to be something that will last, something reliable, and something that’s in it for the long haul. Ubuntu has been good to me, both the OS, and the coffee cup, but in the end, they both broke, and I’ve got to move on.

AutoYast

I wrote this last year and never posted it. I’m glad I found it and can post it now.

Nagios Check Scheduling

Or, maybe a better title for this would be “They rebooted the server, why didn’t I get a page?” I’ve had that question asked of me a few times, and I’ve never had a good answer, so I thought I’d take a closer look at Nagios and see what is going on.

End of an Era

The hard thing about keeping a job in the technology field is that it is constantly changing. Just this past summer $WORK fired several mainframe workers who could not keep up. They got stuck on one technology that they knew how to operate, and failed to evolve when the field did. Now I think it’s clear that another sector of the job market is on its way out, the one that I and thousands of others occupy: the job title of systems administrator.

System P

The more I learn about IBM’s P-Series UNIX systems, the more impressed I am. I’ve been a very harsh critic of them in the past, but that may have just been my ignorance of the platform. The P is, no doubt, expensive… however, when you look at what it can do, and at how many x86 systems you’d need to do the same thing, the P begins to justify its cost.

As an example, we are looking at building a new web hosting environment off of WebSphere. To accomplish this, we are looking at four database servers (DB2), and between six and eight application servers. The total cost for the project, not including the F5 switch, I’d imagine to be somewhere around $100,000. With that money, we could purchase one P-Series that would do everything we need on one box. That equates to less cabling, less administration, less network overhead, and a smaller footprint for the PCI auditors. One box, maybe four Logical Partitions (LPARs), and that’s it.

AIX, IBM’s version of UNIX, is another big win for the P-Series. Running mksysb creates a bootable DVD clone of a running system. So, you can clone an LPAR and install it, along with all the applications you have installed, on a new P-Series. Very impressive, and I wish more systems had this feature built in. AIX has its peculiarities. SMITTY, the administration interface, is confusing and difficult to navigate, and expanding a logical volume on the fly requires more steps than I think should be necessary. Many of the shortcomings of AIX can be solved by installing the AIX Toolbox for Linux, which includes a lot of the basic Linux tools compiled for AIX. Like bash… I can’t live without my tab-completion and vi keyboard bindings! On the whole, AIX is an extremely stable operating system. Configuration is more complex than other systems, but once it’s set up, you can let it run for years without intervention.

I’ll be getting more in-depth with a P550, P561, P570, and one more whose model number I’m not sure of. The next couple of months should be interesting.

The Linux Box and Upgrading Java

As a general rule, I really don’t like to go outside of the box when it comes to Linux. And by that, I mean that I don’t like going outside of what is provided by whatever distribution you are using, be that SLES, Red Hat, or Ubuntu. A lot of people put a lot of work into making sure that the packages that are available for the distribution actually work in the distribution and do not interfere with any other apps. Linux will let you do whatever you want, but just because you can do something doesn’t mean that you should.

Going outside the box can have disastrous results with Linux. Back in early 2000 and 2001 when I was installing SuSE and Mandrake on my old IBM box, I wound up in dependency hell more than once. If you’ve never been there, it goes something like this:

OK, I want to upgrade my music player to the latest version, so I’ll download the latest RPM. Wait, that failed, because it depends on a newer version of some library file that I don’t have, so I’ll go search the Internet and try to find that. OK, found it, downloaded the RPM, and it failed to install because it depends on a newer version of some other library file that I don’t have. Looks like there’s no RPM for that library, so I’ll download the source code and compile it. OK, ./configure; make; make install; Nope, that failed because of a gigantic list of dependencies that are not available! At this point, you have to make a decision: Do you go ahead and find the dependencies, or do you give up and have a drink instead? If you choose to go ahead, you download the source to a dozen different packages and install them, then compile your library, then compile your other library, then go to install the RPM to find that it fails because one of the applications you upgraded along the way is, get this, too new to support your music player, and the install still fails. Oh, and by the way, half of your other apps that used to work don’t work anymore.

Creative Uses for Wordpress

Where I spend my days ($WORK), we have multiple monitoring systems for just about every service on every server that we have. Many of these are Nagios, some are built in, and others are SiteScope. All of the systems generate email alerts that either go to our pagers, our email, or both. From time to time, management would ask a question like “How many pages do you get in a week on average”, to which, up until a couple of months ago, our answer was always “It just depends”.

My Optimized Windows Workflow

I love Linux, I really do. Compared to the older UNIX systems like AIX, HP-UX, and Solaris (which is trying really hard to catch up), Linux is head and shoulders above the rest. The main reason for this is that a lot of really smart people also love Linux, and try their best to make it the best server on the planet. For the most part, I’d agree that we are succeeding on that front. On the other hand, to date, I simply can’t run Linux on my desktop. If there are servers down, or an application fault somewhere, I need to be able to rely on my tools to be there for me. That’s why I run XP on my laptop.

License Restrictions

Software licensing is one of the biggest expenses of high-end server systems. The vendors charge you not only to use the software, but they charge you for how efficiently you want to use the software as well. IBM, for example, charges a different license fee for AIX determined by how many CPUs are in the system. So, to scale in response to load, whether it’s up or out, you have to pay for additional hardware, and then you have to pay for the ability to use that hardware. We are not talking small numbers here either; we are talking upwards of six figures [1], in addition to the cost of the hardware. In addition to that, if you are using proprietary applications on top of the OS, you are going to have to pay additional licensing fees for those as well. WebSphere in particular charges on a per CPU basis.